Projects

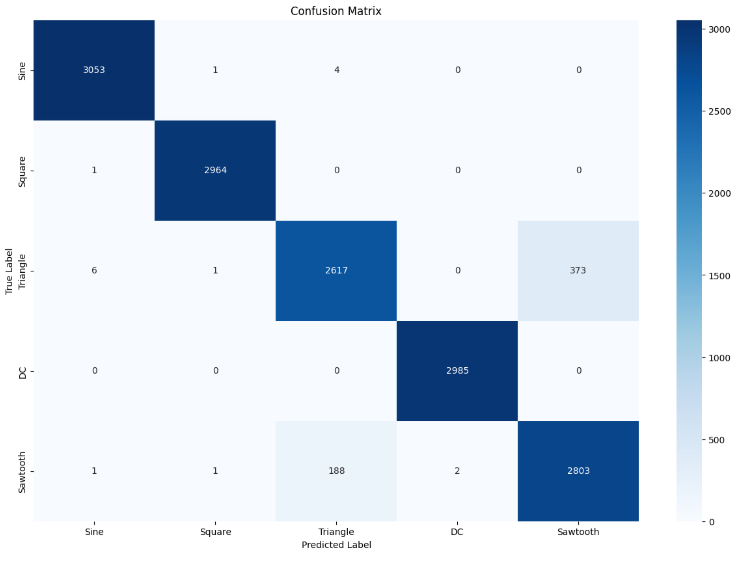

FPGA-Based Signal Implementation (2025)

I contributed to building a real-time analog signal classification system using machine learning and FPGA-based signal processing. The objective was to implement a Random Forest classifier capable of processing noisy, complex analog signals in hardware. I designed and simulated Verilog testbenches to verify individual signal processing modules, including ADC simulation and feature extraction, and I streamlined the algorithms to reduce computational overhead for real-time responsiveness. The project involved converting analog signals into digital form, extracting relevant features (such as RMS, entropy, and spectral metrics), and integrating the classification logic onto an FPGA. We faced notable challenges, including fixed-point arithmetic issues, on-chip memory limitations, and the complexity of implementing ML pipelines in hardware. Working through them deepened my skills in functional verification, hardware-software co-design, and machine learning on reconfigurable platforms.
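The features named above can be sketched in software before they are mapped to hardware. The following is a minimal Python reference model, not the project's Verilog: the function names, the 8-bin histogram used for entropy, the choice of spectral centroid as the "spectral metric", and the 8 fractional bits in `to_fixed` are all assumptions made for illustration.

```python
import cmath
import math
from collections import Counter


def rms(samples):
    """Root-mean-square amplitude of one frame of samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def shannon_entropy(samples, bins=8):
    """Shannon entropy (in bits) of the frame's amplitude histogram."""
    lo, hi = min(samples), max(samples)
    if hi == lo:
        return 0.0  # a constant frame has a single occupied bin
    width = (hi - lo) / bins
    # The maximum sample would land in bin `bins`, so clamp it into the last bin.
    counts = Counter(min(int((s - lo) / width), bins - 1) for s in samples)
    n = len(samples)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())


def spectral_centroid(samples, sample_rate):
    """Magnitude-weighted mean frequency in Hz, using a naive O(n^2) DFT."""
    n = len(samples)
    mags = []
    for k in range(n // 2 + 1):
        acc = sum(s * cmath.exp(-2j * math.pi * k * i / n)
                  for i, s in enumerate(samples))
        mags.append(abs(acc))
    total = sum(mags)
    if total == 0:
        return 0.0
    return sum(k * sample_rate / n * m for k, m in enumerate(mags)) / total


def to_fixed(value, frac_bits=8):
    """Quantize a value to a signed fixed-point integer (assumed format)."""
    return int(round(value * (1 << frac_bits)))


# Example frame: an 8 Hz sine sampled at 64 Hz (hypothetical test signal).
frame = [math.sin(2 * math.pi * 8 * i / 64) for i in range(64)]
features = [rms(frame), shannon_entropy(frame), spectral_centroid(frame, 64)]
quantized = [to_fixed(f) for f in features]
```

A model like this is useful mainly as a golden reference: a testbench can compare the hardware module's fixed-point outputs against `quantized` within a tolerance.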

Ongoing FPGA-Processor Communication Project

In this project, focused on optimizing FPGA-processor communication, I engineered a high-speed data exchange framework using a PolarFire FPGA and its LSRAM architecture. The goal was to improve data throughput and reduce system latency in a real-time embedded environment. To further enhance system performance, I addressed communication bottlenecks that were affecting the Linux-based interface layer. Through targeted timing optimization and protocol tuning, I reduced latency by 10%, enabling smooth Linux image integration with the FPGA hardware. In parallel, I implemented CNN acceleration on the FPGA to support real-time AI inference, optimizing resource allocation and clock cycles. The project culminated with a comprehensive functional validation of the FPGA-processor interaction, ensuring stable and high-performance communication across the system.

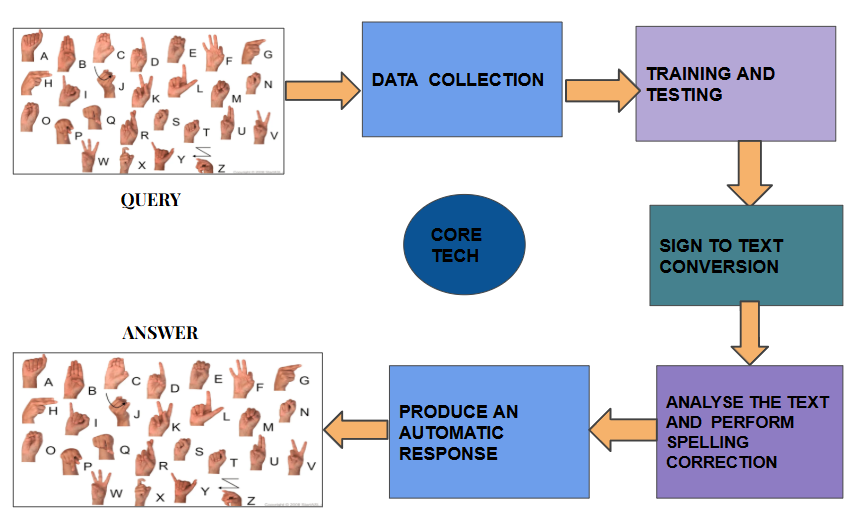

Sign-Kiosk: A Real-Time Virtual Assistant

I developed an interactive kiosk system that enables two-way communication between hearing- or speech-impaired users and others without the need for an interpreter. The system detects American Sign Language (ASL) gestures in real time, generates contextual responses, and delivers them back as sign videos or speech output. For sign recognition, I implemented landmark-based gesture tracking using MediaPipe Holistic, extracting the 3D coordinates of 21 hand joints to classify letters of the ASL alphabet. I trained machine learning models including KNN, SVM, and Random Forest, and designed the dataset collection process to handle varied hand shapes and orientations. By using coordinate-based features instead of bulky image datasets, the system became more memory-efficient and privacy-friendly. To generate responses, I integrated an NLP pipeline that combined the Spello model for spelling correction with the RoBERTa Transformer for question answering. This allowed the system to provide context-aware and grammatically correct responses. For output, I created a video concatenation engine in MoviePy to assemble pre-recorded sign clips into complete sentences, and implemented a text-to-speech module using phoneme modeling, prosody control, and Mel-spectrogram processing to produce natural, intelligible speech. This project brought together computer vision, machine learning, and natural language processing to create a scalable communication aid that can be deployed in public spaces, healthcare facilities, and service environments. As an example, we used our college database for training.
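To illustrate why coordinate-based features are compact, here is a minimal sketch of turning 21 hand landmarks into a 63-value vector and classifying it with a small KNN. It deliberately does not call MediaPipe; the input is assumed to be a list of 21 `(x, y, z)` tuples with the wrist at index 0. The wrist-centred, size-scaled normalization and the helper names are assumptions for illustration, not necessarily the preprocessing used in the project.

```python
import math
from collections import Counter


def normalize_landmarks(landmarks):
    """Flatten 21 (x, y, z) hand landmarks into a 63-value feature vector.

    Points are shifted so the wrist (index 0) is the origin and divided by the
    largest wrist-to-joint distance, so the features do not depend on where
    the hand is in the frame or how large it appears.
    """
    if len(landmarks) != 21:
        raise ValueError("expected 21 hand landmarks")
    wx, wy, wz = landmarks[0]
    shifted = [(x - wx, y - wy, z - wz) for x, y, z in landmarks]
    # Fall back to 1.0 if every point coincides with the wrist.
    scale = max(math.sqrt(x * x + y * y + z * z) for x, y, z in shifted) or 1.0
    return [c / scale for point in shifted for c in point]


def knn_predict(train, query, k=3):
    """Majority vote among the k nearest training vectors (Euclidean distance).

    `train` is a list of (feature_vector, label) pairs.
    """
    nearest = sorted(train, key=lambda item: math.dist(item[0], query))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]
```

Each sample costs 63 floats rather than a full image, which is the memory and privacy advantage described above. The same vectors could be passed to an SVM or Random Forest in place of the hand-written KNN.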

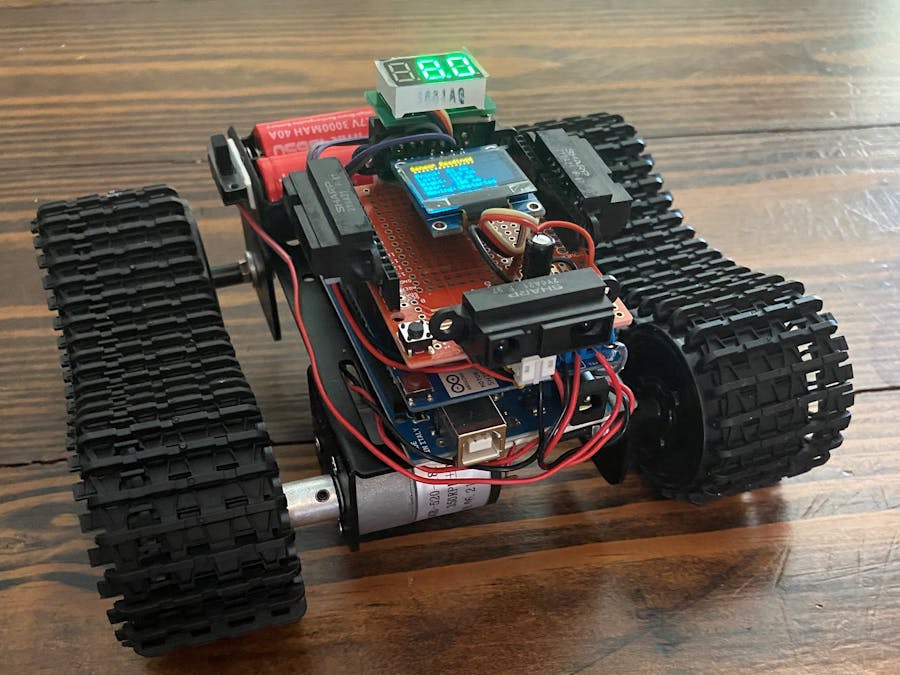

Human Following Robot

I designed and built a human-following robot capable of detecting and tracking a person within a defined range using infrared (IR) sensors. The project combined Arduino-based control with DC motor actuation to enable autonomous navigation without direct manual input. For human detection, IR sensors were placed on either side of the robot to emit and detect reflected infrared signals. The readings determined the relative position of a person, allowing the robot to adjust its path accordingly. The Arduino processed these inputs in real time, controlling the left and right motors to move forward, turn left, or turn right, depending on the detected position. I programmed the control logic to handle different scenarios: stopping when no object was detected, adjusting direction when the target was off-center, and maintaining forward motion when both sensors detected the human within range. This project demonstrated the integration of sensor-based navigation, microcontroller programming, and motor control systems, and provided a foundation for more advanced applications in robotics such as obstacle avoidance and autonomous service robots.
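The decision logic described above can be summarized as a small truth table. The sketch below is written in Python for readability rather than as the Arduino firmware itself; the command names, the rule that the robot turns toward the side whose sensor detects the person, and the PWM value of 150 are assumptions for illustration.

```python
def drive_command(left_detects: bool, right_detects: bool) -> str:
    """Choose a motion command from the two IR sensor readings."""
    if left_detects and right_detects:
        return "forward"      # person is centred and within range
    if left_detects:
        return "turn_left"    # person is off-centre to the left (assumed rule)
    if right_detects:
        return "turn_right"   # person is off-centre to the right (assumed rule)
    return "stop"             # nothing detected


def motor_speeds(command: str, speed: int = 150) -> tuple:
    """Map a command to (left_motor, right_motor) PWM values.

    On a differential drive, stopping the left wheel while the right wheel
    runs pivots the robot to the left, and vice versa.
    """
    table = {
        "forward": (speed, speed),
        "turn_left": (0, speed),
        "turn_right": (speed, 0),
        "stop": (0, 0),
    }
    return table[command]
```

Keeping the sensor decision separate from the motor mapping makes each half easy to check on its own, for example by stepping through all four sensor combinations.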

Signal Generation and Plotting in a PyQt GUI

I developed a PyQt-based graphical user interface (GUI) for generating, acquiring, and visualizing signals in real time. The interface included a built-in Fast Fourier Transform (FFT) module to convert time-domain signals into their frequency-domain representation, allowing for real-time spectral analysis. The GUI displayed live waveform plots alongside FFT spectra, enabling faster interpretation of signal behavior. I optimized the signal acquisition pipeline to minimize latency between capture and display, improving its usability for time-sensitive applications. Signal processing routines were streamlined to handle higher sampling rates without performance drops, making the tool suitable for real-time monitoring and analysis. This project combined signal processing algorithms, PyQt interface design, and performance optimization to deliver an interactive and efficient tool for real-time signal visualization.
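The time-to-frequency conversion at the core of the FFT module can be sketched without any GUI code. The example below is a minimal, dependency-free radix-2 FFT with a helper that returns the one-sided magnitude spectrum. The function names and the amplitude scaling are assumptions for illustration; a real tool would more likely call an optimized library routine.

```python
import cmath
import math


def fft(x):
    """Recursive radix-2 Cooley-Tukey FFT; len(x) must be a power of two."""
    n = len(x)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a non-zero power of two")
    if n == 1:
        return [complex(x[0])]
    even = fft(x[0::2])
    odd = fft(x[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * math.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def magnitude_spectrum(samples, sample_rate):
    """Return (frequencies_hz, magnitudes) for bins 0 .. n/2.

    Interior bins are scaled by 2/n so a unit-amplitude sine reads about 1.0;
    the DC and Nyquist bins have no mirror image and are scaled by 1/n.
    """
    n = len(samples)
    bins = fft(samples)[: n // 2 + 1]
    freqs = [k * sample_rate / n for k in range(n // 2 + 1)]
    mags = []
    for k, b in enumerate(bins):
        scale = 1 / n if k in (0, n // 2) else 2 / n
        mags.append(abs(b) * scale)
    return freqs, mags


# Example: a 32 Hz unit-amplitude sine sampled at 256 Hz (hypothetical values).
signal = [math.sin(2 * math.pi * 32 * i / 256) for i in range(256)]
freqs, mags = magnitude_spectrum(signal, 256)
peak_hz = freqs[max(range(len(mags)), key=mags.__getitem__)]
```

In a live plot, `freqs` and `mags` would be recomputed for each new block of samples and pushed to the spectrum view alongside the raw waveform.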